Workshop on Geometry compression

Sophia-Antipolis

november 6, 10:00 to november 7, 17:00 2003

Venue information Program

| Topics |

|

| Invited speakers |

On the optimality of some 3D mesh

compression methods

Markov Models for Optimal Arithmetic Coding

Arithmetic coding achieves provably optimal compression rates for all commonly used probability models, such as information sources with and without memory and the popular Markov Models. In a lot of applications, where an upper bound on the coding performance is necessary, the most difficult aspect is the design of a good probability model. This talk illustrates a new method to derive from a given state machine a Markov Model that encodes an output sequence from the state machine optimally in the limit for infinitely long sequences. With the new method the design problem is simplified to the design of a good state machine that enumerates all possible input sequences. For a commonly used triangle mesh connectivity coding method the design of such a state machine is shown and the application of the method allows for improving the upper bound for the coding performance.

Out-Of-Core

Processing of Huge Polygonal Meshes

|

Organization

The workshop is organized by INRIA collaborative action Telegeo.

Registration

Talks

François Cayre (ENST-TSI)

Spatial watermarking for authentication of 3D triangles meshes

We present a new watermarking scheme dedicated to the authentication of

3D models. Our scheme is naturally invariant to translation, rotation

and scaling of the model. Every other manipulation should erase the

watermark. We show how to achieve near-optimal embedding of the hidden

information using an indexed localization in the spatial domain. We also

give several bounds of the algorithm, including the Minimum Watermark

Segment (MWS), the actual length of the keys and the number of sites

used to embed the watermark. Our results will show the relevance of the

method against various manipulations.

Sébastien Valette, Alexandre Gouaillard and Rémy Prost (CREATIS)

Lossy and Lossless Multiresolution Mesh Compression via Wavelet

Representation

We present a new scheme for decomposition of 3D meshes Geometry by

Wavelet Decomposition. Based on an irregular subdivision scheme, this scheme

applies directly to irregular meshes, without the need for remeshing.

Associated with a compact connectivity scheme, we show how to achieve an

efficient progressive compression of 3D meshes. Two approaches of compression

are the described : progressive compression by geometry and connectivity

refinement, and progressive compression by geometry refinement. We also

present results obtained with both approaches on several reference models.

Stéphane Pateux, Nathalie Cammas (IRISA)

Meshes for video analysis and coding

We present a general approach for video analysis and representation

with 2D deformable meshes. A hierarchical dynamic triangular mesh structure

is estimated from the input image sequence through a global optimization

scheme. Using wavelet decomposition, the triangular mesh embeds both

texture and motion information along the video sequence.

Such a representation can be used for efficient scalable video coding

or for 3D estimation and modelisation.

Raphaèle Balter, Franck Galpin, Patrick Gioia, Luce Morin (IRISA)

3D Mesh processing for video coding

We present a video coding scheme based on 3D modelisation.

From a video sequence of a static scene viewed by a moving camera,

we extract a stream of textured 3D mesh models and camera positions.

They are coded using a scalable representation and used at the decoder

to regenerate the original video.

Such a scheme allows very low bit-rate compression as well as virtual

reality applications.

Benjamin Le Guen, Raphaèle Balter, Luce Morin (IRISA), Pierre Alliez (INRIA)

Morphing of estimated 3D models

We propose a morphing procedure between 3D mesh models, adapted to

the case of estimated 3D models from a video sequence.

Our goal is to obtain a visual continuity when reprojecting the 3D

models onto the video camera viewpoints. We use 2D parametrisation

and a mesh fusion procedure. We take into account the matching

constraints provided by the motion field estimated from the image sequence.

Gilles Schaeffer (LIX)

Compact coding of triangulations, an enumerative approach.

Pierre Alliez (INRIA)

Anisotropic Polygonal Remeshing

We propose a novel polygonal remeshing technique that exploits a key aspect of surfaces: the intrinsic anisotropy of natural or man-made geometry. In particular, we use curvature directions to drive the remeshing process, mimicking the lines that artists themselves would use when creating 3D models from scratch. After extracting and smoothing the curvature tensor field of an input genus-0 surface patch, lines of minimum and maximum curvatures are used to determine appropriate edges for the remeshed version in anisotropic regions, while spherical regions are simply point-sampled since there is no natural direction of symmetry locally. As a result our technique generates polygon meshes mainly composed of quads in anisotropic regions, and of triangles in spherical regions. Our approach provides the flexibility to produce meshes ranging from isotropic to anisotropic, from coarse to dense, and from uniform to curvature adapted.

Tony Tung (ENST)

3D object indexing using Multiresolutional Reeb Graph

We present a 3D indexing method for retrieving 3D models of objects in

dedicated databases. Among the various techniques in the literature, we have

chosen the Multiresolutional Reeb Graphs (MRG) approach presented in [Hilaga

et al, SIGGRAPH'01]. A Reeb graph is a unidimensional skeleton based on the

topology of the object. The function used to build the MRG is robust in case

of mesh modifications (noise on points, decimation, etc.). The comparison

scheme is multiresolutional and uses a coarse-to-fine approach. We provided

geometrical informations to the MRG to improve the matching of the graph for

similarity estimation of 3D objects.

Guillaume Lavoué, Florent Dupont et Attila Baskurt (LIRIS)

Curvature Based Triangle Mesh Segmentation with Boundary Rectification

We present a new and efficient algorithm for surface decomposition of

arbitrary triangle meshes, based on curvature information analysis. Our

method not only "cut" the object along its edges but decomposes it into

constant curvature surfaces, with a precision threshold given by the

user. Contrary to the existing methods, a post-processing allow to

obtain patches that have smooth and regular boundaries, and thus which

can be later fit with NURBS or subdivision surfaces in a

multi-resolution compression perspective.

Luca Castelli (LIX)

Canonical triangulation of a graph, with coding application

Frédéric Payan, Marc Antonini (I3S)

A Wavelet Geometry Coder including Normal Field Control

We present an original geometric wavelet coder for 3D meshes including a model-based bit allocation.

The proposed coder takes into account the high sensitivity of the surface-to-surface distance

to the normal information quantization. Indeed, the proposed bit allocation criterion is penalized by the

knowledge of the polar angle between the quantization error vector and the normal direction in a local

coordinate frame, and consequently permits the control of the error vector orientation. Then,

bits are allocated preferentially to the normal set favorizing the minimization of a surface-to-surface

distortion measure.

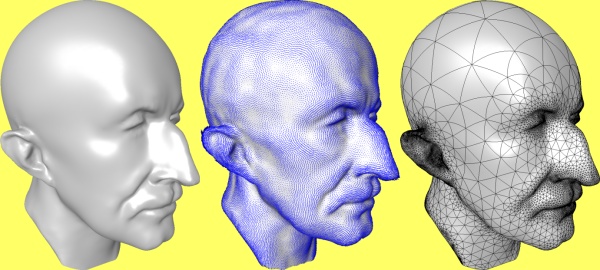

Carlos Hernandez Esteban (ENST)

Silhouette and Stereo Fusion for 3D Object Modeling

We present a new approach to high quality 3D object reconstruction.

Starting from a calibrated sequence of color images, the algorithm is

able to reconstruct both the 3D geometry and the texture. The core of the

method is based on a deformable model, which defines the framework where

texture and silhouette information can be fused. This is achieved by

defining two external forces based on the images: a texture driven force

and a silhouette driven force. The texture force is computed in two

steps: a multi-stereo correlation voting approach and a gradient vector

flow diffusion. Due to the high resolution of the voting approach, a

multi-grid version of the gradient vector flow has been developed. A new

formulation of the silhouette constraint is derived allowing its direct

integration in the evolution algorithm. Finally we use the Laplacian and

the biharmonic operators as internal forces which, together with a

multi-resolution deformable model, allow us to obtain high quality 3D

models.

Arnaud Gelas (CREATIS)

Curvature Based Remeshing of 3D Meshes

Multiresolution meshes are very attractive in many fields of research, such as Computer Graphics, Finite Element, and Medical Image Processing. There are two different ways to construct the mesh hierarchy, coarse to fine and fine to coarse methods. Our method is coarse to fine one, so it converts a mesh into a multiresolution one. Usual methods that are based on geometrical error, while the proposed method is an adaptive remeshing based on curvature information. We also show an interesting application of our remeshing method: compression of 3D Meshes.

Alexandre Gouaillard, Sebastien Valette, Remy Prost (CREATIS), in collaboration with Pierre Alliez (INRIA).)

Multiresolution mesh for 3D Segmentation

Segmentation of image and volumes proved to be more robust when an a-priori

model is used. Previous work proved that using a multire'solution

decomposition of the data AND of the model leads to 50 ( 3 levels of

decompositions) to 66 % ( 5 levels of decomposition) theoretical decrease in

time to converge to the same solution with the same Snake algorithm.

Previous work showed that, on real case, the decrease was closer to 90 % and

that the robustness was greatly improved in presence of noise.

Even if the same theoretical results can be achieved on 3D, building an

adequate multire'solution mesh for segmentation is a difficult task. The

number of vertices must stay as low as possible as the Snake algorithm's

complexity depends linearly on it. On the other hand, the mesh must be as

regular and uniform as possible for the discretization of the energy

function that drives the model's deformation to stand. Last but not least,

the multire'solution decomposition of the data tends to change the topology

of the approximation of the object , while the multire'solution decomposition

of a mesh never do. multire'solution decomposition of a mesh should allow

some topological changes for the model and the data at a given resolution to

be synchronised.

We will propose here a method to construct an as regular and uniform as

possible multiresolution mesh for segmentation purposes. Discussion about

how to include topological changes in a multiresolution decomposition

algorithm will be provided as a "work under progress" part.

Patrick Gioia (France Telecom)

Real-time adaptive reconstruction of Wavelet Encoded Meshes

We present a set of algorithms for real-time decoding and reconstruction of

meshes encoded using geometric wavelets. Wavelet compression of surfaces has

proven to be a powerful tool for lossy mesh coding, and is now part of the

MPEG-4 standard. While leading to impressive compression rates, the encoding

relies on quite complex transformations which make real-time decoding and

reconstruction not straightforward. The algorithms we propose allow

view-dependent streaming and real-time adaptive reconstruction of meshes of

arbitrary size. They can be used for scalable streaming of any medium with

underlying meshed objects, such as model-based video or finite elements for

motion compensation.

B. Levy (ISA), B. Vallet, A. Sheffer

Differentially Constrained Parameterization using Alternative Variables

These last few years, time and effort has been devoted to design

parameterization algorithms for mesh models, minimizing area or

angle deformations. Area-constrained and authalic

parameterizations are best suited to map a signal onto a surface, like in

texture mapping. Angle-preserving (i.e. conformal) parameterizations

show suitable properties for remeshing algorithms and PDE solvers. We present two new parameterization methods

that overcome some theoretical and practical limitations of previous

work. Our authalic parameterization DPBF directly optimizes the Jacobian

of the transform in dot-product space, which provides a finer

control on the use of texture space.

Our conformal parameterization ABF++ is a highly efficient

extension of the ABF method. In ABF++, algebraic transforms

dramatically reduce the dimension of the linear systems solved by ABF.

Instead of directly optimizing $u,v$ coordinates, both DPBF and

ABF++ use alternative variables, naturally adapted to the problem.

To reconstruct the $u,v$'s from those

variables, we propose LSAM, a new anisotropic generalization of

LSCM. Using LSAM, the $u,v$'s are globally optimized, which avoids

the numerical instabilities caused by accumulated errors in greedy

algorithms, such as ABF's reconstruction phase.

Nicolas Ray (ISA)

Hierarchical Least Squares Conformal Maps

A texture atlas is an efficient way to represent information (like

colors, normals, displacement maps ...) on triangulated surfaces. Our

LSCM method (Least Squares Conformal Maps) automatically generates a

texture atlas from a meshed model. For large charts (over 100k

facets), the convergence of the numerical solver may be slow. It is

well known that the conformality criterion, minimized by LSCM, also

corresponds to a harmonicity condition, meaning that barycentric

coordinates are locally preserved through the parameterisation. This

has two different consequences:

o cascadic multigrid methods (coarse to fine) are well

adapted to this criterion, and dramatically speed up

the convergence of the numerical solver ;

o the obtained parameterisation naturally minimises texture

swimming when used to texture-map a progressive mesh.

we introduce HLSCM (Hierarchical LSCM), a cascadic

multigrid version of LSCM. As an example of possible applications,

the paper shows how normal maps and simplified models can be

automatically generated from large scanned meshes. Using these normal

maps, the visual appearance of the model can be preserved more even

when 90% of the vertices are removed from the initial model.

Marco Attene, Bianca Falcidieno, and Michela Spagnuolo (IMATI-GE / CNR -

Italy),

Jarek Rossignac (GVU Center / GATECH - Georgia-USA)

Remeshing-based compression of manifold triangle meshes

We focus on the lossy compression of manifold triangle meshes. Our

SwingWrapper approach partitions the surface of an original mesh M into

simply connected regions, called triangloids. From these, we generate a new

mesh M'. Each triangle of M' is an approximation of a triangloid of M. By

construction, the connectivity of M' is fairly regular and can be compressed

to less than a bit per triangle using EdgeBreaker or one of the other

recently developed schemes. The locations of the vertices of M' are

compactly encoded with our new prediction technique, which uses a single

correction parameter per vertex. SwingWrapper strives to reach a

user-defined output file size rather than to guarantee a given error bound.

For a variety of popular models, a rate of 0.4 bits/triangle yields an L2

distortion of about 0.01% of the bounding box diagonal.

Due to their constrainded locations, the vertices of the new mesh M' are

rarely aligned with possible sharp edges of the original mesh M.

Consequently, such sharp edges are likely to be replaced by chamfer

artifacts. We propose an automatic technique for restoring most of the

original sharp edges and corners on M' without the need to encode any

additional information.

Related papers:

- Marco Attene, Bianca Falcidieno, Michela Spagnuolo and Jarek Rossignac,

"SwingWrapper: Retiling Triangle Meshes for Better EdgeBreaker Compression",

ACM Transactions on Graphics, 22(4), pp. 1-15, 2003.

- Marco Attene, Bianca Falcidieno, Jarek Rossignac and Michela Spagnuolo,

"Edge-Sharpener: Recovering sharp features in triangulations of

non-adaptively re-meshed surfaces", In Proceedings of the Eurographics / ACM

SIGGRAPH Symposium on Geometry Processing, pp. 62-71, 2003.

Seungyong Lee (Postech, Korea)

Random-Accessible Mesh Compression

We present a novel approach to mesh compression, which we call random accessible mesh compression.

The most notable benefit of this approach is the random-accessibility of the encoded mesh,

which enables effective handling of large meshes with limited memory space.

Alex Yvart (LMC-IMAG)

Hierarchical Modeling of Smooth Surfaces of arbitrary topology

Two opposite schemes can be used in order to compute a smooth surface over a mesh :

parametric surfaces or subdivision surfaces.

Each method has its own advantages and drawbacks. Our purpose is to cumulate the advantages

of both schemes. To achieve this goal, we compute a parametric surface which is refinable

to add details and deal with multiresolution. We obtain a hierarchical structure of smooth

surfaces capable of level of details edition.

Sponsors